Engineering Internship Spotlight: Temporal Replay through CircleCI

Behind the Scenes • Published on August 22, 2022

Convoy has begun automating shipper-facing tasks with a tool built on Temporal, an open source platform for reliable program execution. These workflows are often long-running because they wait on input from frontend users to complete their steps. Keeping such long-lived workflows healthy across code changes, however, is not always a smooth process.

The problem

In one instance, a code change pushed to a workflow affected the results of workflows that were already running. Unless a change is explicitly versioned, Temporal workers run the new code for every workflow, including those already in progress. Because Temporal requires all workflows to be deterministic, the change caused every actively running workflow to hit a non-determinism failure. Since Temporal automatically retries on failure, all of these workflows were stuck in a retry loop. New workflows could not start because the old ones starved out resources on the workers (the processes that pick up work to complete workflows), so all workflows had to be terminated. As a result, the effort frontend users had put into completing workflow tasks was wasted, and production was halted until the issue was addressed.

This is an issue I aimed to prevent when I took on my project this summer as an intern on the Platform for Automation and Workflows (PAW) team: building an automatic validation tool for other engineers to confirm that only deterministic changes would be deployed.

Through this workflow tool, engineering teams across Convoy can build and iterate on the automation of predictable business processes, and easily present work to frontend users when automation is hard. The workflows are built with Temporal's tools, but because Temporal is a new concept to most developers and whole teams collaborate on their workflows, it can be difficult to keep track of all workflow dependencies and predict how a change will affect other workflows. This makes it especially important for developers to know whether their changes break determinism before deploying their code to production.

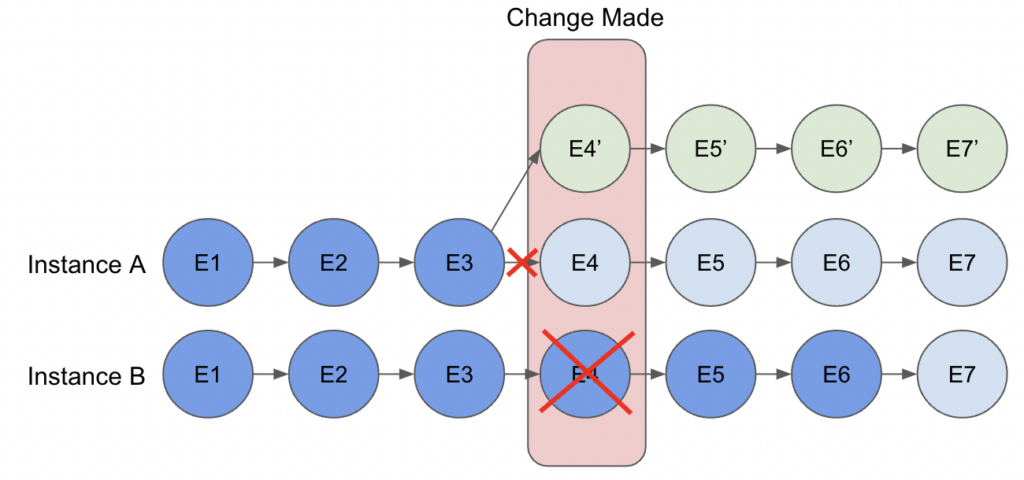

Changes are safe when there are no running workflows (either all have completed or none have started), or when a change only touches code that no running workflow instance has reached yet. This becomes more complicated with multiple instances of long-running workflows.

Consider a situation with 5 running instances of one workflow type, each at a different point in execution. Assume instance A has completed 3 events and instance B has completed 6. If we deploy a change to the workflow code around event 4, instance A simply picks up the change when it reaches that point, whereas instance B, which already recorded event 4 under the old code, produces a different result on replay and throws a non-determinism error. At the same time, we cannot stop all running workflows, since we would lose their state, potentially throwing away weeks of work. In addition, Temporal may re-execute a workflow from its history at any point, because it retries in case of system failure. For both of these reasons, workflows must be deterministic and produce the same results on every run and re-run.
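
The A/B scenario can be made concrete with a toy model. This sketch is deliberately simplified and is not how Temporal implements replay: workflow code is reduced to the ordered list of events it emits, and replay checks that every event an instance has already recorded matches what the current code emits at the same position.

```typescript
// Toy model only: NOT Temporal's replay implementation. Workflow code is
// reduced to the ordered list of events it emits; a history is the list of
// events already recorded for one running instance.
type WorkflowCode = string[];
type History = string[];

// Replays a recorded history against (possibly new) workflow code. Events the
// instance already recorded must match what the code emits at that position;
// events the instance has not reached yet are not checked.
function replay(code: WorkflowCode, history: History): void {
  history.forEach((recorded, i) => {
    const emitted = code[i];
    if (emitted !== recorded) {
      throw new Error(
        `non-determinism at event ${i + 1}: history has "${recorded}", new code emits "${emitted}"`
      );
    }
  });
}

const oldCode: WorkflowCode = ["e1", "e2", "e3", "e4", "e5", "e6", "e7"];
// The deployed change alters what happens at event 4.
const newCode: WorkflowCode = ["e1", "e2", "e3", "e4-changed", "e5", "e6", "e7"];

// Both instances ran under the old code: A completed 3 events, B completed 6.
const historyA: History = oldCode.slice(0, 3);
const historyB: History = oldCode.slice(0, 6);
```

Calling `replay(newCode, historyA)` returns normally because instance A has not reached event 4 yet, while `replay(newCode, historyB)` throws at event 4, mirroring the non-determinism error instance B would hit.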

The solution

Temporal provides a tool to solve this: Replay Workflow History, which reruns a previous workflow's event history against the new code to verify that the new code reproduces that history. To use it, developers had to connect to the Temporal database, look up the ID of a workflow of interest, and run commands to fetch its history and replay it themselves. Doing this within Convoy's existing architecture and pipeline was tedious, and it relied on developers remembering to run the steps before merging their changes.

To replace this easy-to-miss and burdensome manual process, this project integrates Temporal's workflow replay feature into the CI/CD pipeline so that every change pushed to GitHub is automatically checked to confirm it does not break determinism. This improves both the speed and quality of development: engineers get immediate feedback on whether their changes are breaking, which prevents outages and failures in production.

Diving deeper

We first needed to gather all workflow types to replay against. Fortunately, we have an internal object storing all runnable workflows, and developers must update it before the workflow can be picked up by workers, so we used this object as the source of truth for our replayable workflow types.
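
As a sketch of the "single source of truth" idea, assuming a hypothetical registry shape (the real object's name and fields are not given here), the replayable workflow types can be read straight off the same object that workers use:

```typescript
// Hypothetical shape of the internal registry of runnable workflows. The real
// object's name and fields are not shown in this post; the point is that the
// same object feeds both the workers and the replay check.
interface WorkflowRegistration {
  taskQueue: string;
}

const workflowRegistry: Record<string, WorkflowRegistration> = {
  onboardShipper: { taskQueue: "shipper-tasks" },
  reviewQuote: { taskQueue: "shipper-tasks" },
  confirmCarrierPayout: { taskQueue: "carrier-tasks" },
};

// Every registered workflow type is a replay candidate. Since a workflow must
// be registered before workers will run it, any type workers can run should
// appear here. Sorting keeps CI output stable between runs.
function replayableWorkflowTypes(registry: Record<string, WorkflowRegistration>): string[] {
  return Object.keys(registry).sort();
}
```

Deriving the list this way means a newly added workflow is covered by the replay check as soon as it is registered, with no separate list to keep in sync.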

Once we had the workflow types, our next step was to give our CI/CD pipeline access to the Temporal database so it could retrieve workflow IDs and event histories to replay against. This included setting up a new certificate and key for this specific use case.
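
A minimal sketch of loading those credentials in the CI job, assuming they are stored as environment variables with the hypothetical names below. The returned shape mirrors the Temporal TypeScript SDK's connection options (an address plus `tls.clientCertPair` with `crt` and `key`), but this is an illustration rather than the project's actual setup.

```typescript
// Sketch only. The environment variable names are hypothetical. The returned
// shape mirrors the Temporal TypeScript SDK's connection options (address plus
// tls.clientCertPair { crt, key }); the SDK expects crt/key as Buffers, so a
// real script would convert these strings before connecting. The namespace is
// supplied separately when the client is created.
interface ReplayConnectionSettings {
  address: string;
  namespace: string;
  tls: { clientCertPair: { crt: string; key: string } };
}

const REQUIRED_VARS = [
  "TEMPORAL_ADDRESS",
  "TEMPORAL_NAMESPACE",
  "TEMPORAL_CLIENT_CERT",
  "TEMPORAL_CLIENT_KEY",
];

function settingsFromEnv(env: Record<string, string | undefined>): ReplayConnectionSettings {
  const missing = REQUIRED_VARS.filter((name) => !env[name]);
  if (missing.length > 0) {
    // Fail fast so a misconfigured CI job is not mistaken for a replay failure.
    throw new Error(`missing environment variables: ${missing.join(", ")}`);
  }
  return {
    address: env["TEMPORAL_ADDRESS"] as string,
    namespace: env["TEMPORAL_NAMESPACE"] as string,
    tls: {
      clientCertPair: {
        crt: env["TEMPORAL_CLIENT_CERT"] as string,
        key: env["TEMPORAL_CLIENT_KEY"] as string,
      },
    },
  };
}
```

Using a dedicated certificate and key for CI keeps the replay job's access separate from other services' credentials.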

With the Temporal connection established, for each workflow type, we used Temporal’s querying API to get the IDs of the 5 oldest running workflow instances. We chose these parameters because:

- If a workflow has stopped running, either because it has completed or been terminated, it is not relevant to the determinism check, because new code cannot cause it to hit an error or affect its result.

- Older workflows have likely progressed further, and a more-progressed workflow is more likely to catch non-deterministic changes because its history covers more of the code.

- We wanted to cover as many branches of logic as possible without replaying every running workflow. The magic number of 5 was a tradeoff between execution time and thoroughness.

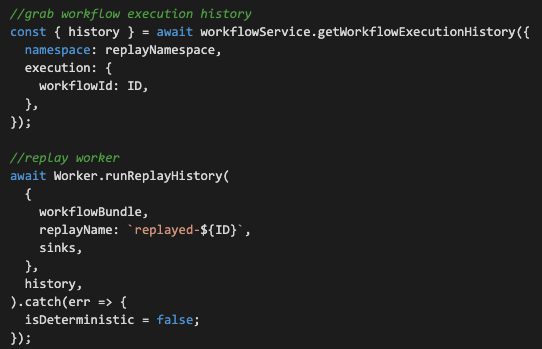

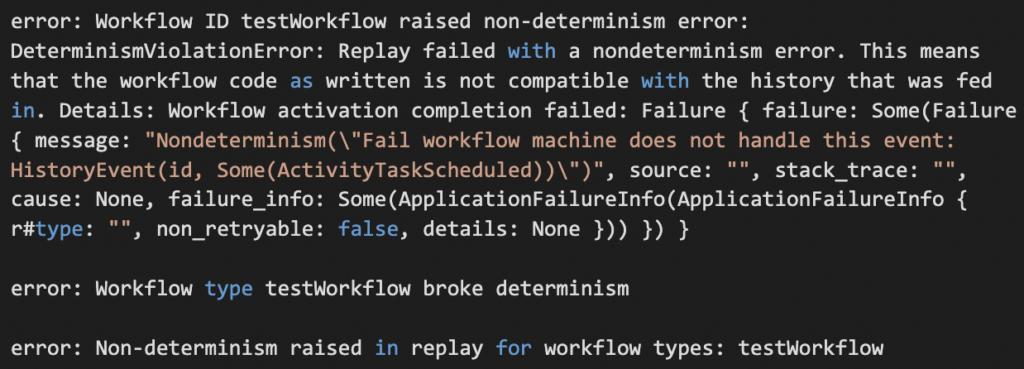
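
The exact query is not spelled out here, so the following is a sketch under assumptions: it builds a list filter from Temporal's built-in `WorkflowType` and `ExecutionStatus` search attributes, then picks the 5 oldest running instances client-side (server-side ordering support depends on the visibility store). `ExecutionInfo` is a hypothetical, simplified stand-in for a listing result.

```typescript
// Hypothetical, simplified view of one row returned by a workflow listing.
interface ExecutionInfo {
  workflowId: string;
  workflowType: string;
  status: string; // e.g. "Running", "Completed", "Terminated"
  startTime: Date;
}

// List filter built from Temporal's built-in WorkflowType and ExecutionStatus
// search attributes; completed or terminated workflows are excluded up front.
function runningWorkflowsQuery(workflowType: string): string {
  return `WorkflowType = '${workflowType}' AND ExecutionStatus = 'Running'`;
}

// Keeps running executions only and returns the IDs of the n oldest by start
// time (n = 5 in this project). filter() returns a new array, so sort() does
// not reorder the caller's input.
function oldestRunningIds(executions: ExecutionInfo[], n = 5): string[] {
  return executions
    .filter((e) => e.status === "Running")
    .sort((a, b) => a.startTime.getTime() - b.startTime.getTime())
    .slice(0, n)
    .map((e) => e.workflowId);
}
```

In a real script, the filter string would be passed to the visibility listing API for each workflow type, and the returned executions fed to `oldestRunningIds`.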

From the workflow IDs, we fetch the workflow event histories and use them to kick off the replay. The `Worker.runReplayHistory` call spins up a worker and either completes silently or throws a non-determinism error. Both steps appear in the code snippet below:

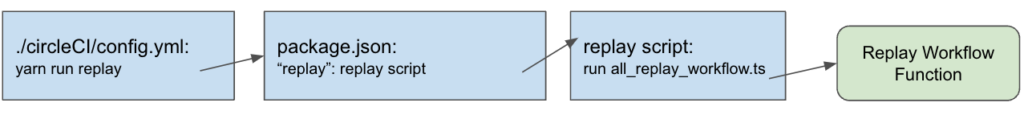
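
A text sketch of the same two steps might look like the following. It is hedged: the history types are simplified stand-ins for the SDK's protobuf types, and both the page fetcher and the replayer are injected so the control flow runs standalone. In a real script, the fetcher would wrap the `GetWorkflowExecutionHistory` call (which returns events a page at a time along with a `nextPageToken`), and the replayer would wrap `Worker.runReplayHistory`.

```typescript
// Simplified stand-ins for the protobuf history types used by the SDK.
interface HistoryEvent {
  eventId: number;
  eventType: string;
}
interface History {
  events: HistoryEvent[];
}
// One page of a history listing; real page tokens are bytes, modeled as strings here.
interface HistoryPage {
  events: HistoryEvent[];
  nextPageToken?: string;
}

// In a real script: a wrapper around the GetWorkflowExecutionHistory call.
type FetchHistoryPage = (workflowId: string, pageToken?: string) => Promise<HistoryPage>;
// In a real script: a wrapper around Worker.runReplayHistory, which resolves
// on success and rejects when replay detects non-determinism.
type ReplayHistory = (workflowId: string, history: History) => Promise<void>;

// Follows nextPageToken until no further page is reported.
async function fetchFullHistory(workflowId: string, fetchPage: FetchHistoryPage): Promise<History> {
  const events: HistoryEvent[] = [];
  let pageToken: string | undefined;
  do {
    const page = await fetchPage(workflowId, pageToken);
    events.push(...page.events);
    pageToken = page.nextPageToken;
  } while (pageToken);
  return { events };
}

// Replays every workflow ID and records the error message for each one that
// failed, so one bad instance does not hide failures in the others.
async function replayWorkflows(
  workflowIds: string[],
  fetchPage: FetchHistoryPage,
  replay: ReplayHistory,
): Promise<Record<string, string>> {
  const failures: Record<string, string> = {};
  for (const workflowId of workflowIds) {
    const history = await fetchFullHistory(workflowId, fetchPage);
    try {
      await replay(workflowId, history);
    } catch (err) {
      failures[workflowId] = err instanceof Error ? err.message : String(err);
    }
  }
  return failures;
}
```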

To connect the replaying to CircleCI, we used a script to run the replay functionality and ran that script from CircleCI. This allows pretty much all code changes for this project to be contained to one repository, which is beneficial for visibility and maintenance. The connection is set up as so:

Optimizations

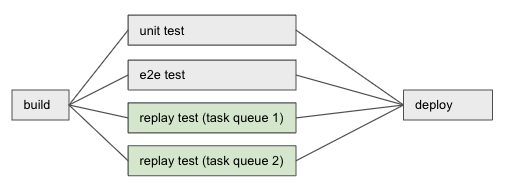

To keep the replay test from taking too long and blocking deployment, we split up workflow replaying by creating one CircleCI job per task queue, each replaying all of the workflows that belong to that queue. This helps because CircleCI runs independent jobs in parallel.

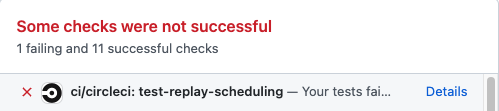
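
The split itself is a simple grouping. A minimal sketch, assuming hypothetical registration records that pair each workflow type with its task queue:

```typescript
// Hypothetical registration data: which task queue each workflow type runs on.
interface Registration {
  workflowType: string;
  taskQueue: string;
}

// Groups workflow types by task queue; each group would become one parallel
// CircleCI job that replays every workflow type on that queue.
function replayJobsByTaskQueue(registrations: Registration[]): Record<string, string[]> {
  const jobs: Record<string, string[]> = {};
  for (const { workflowType, taskQueue } of registrations) {
    if (!jobs[taskQueue]) {
      jobs[taskQueue] = [];
    }
    jobs[taskQueue].push(workflowType);
  }
  return jobs;
}
```

Each key would map to one CircleCI job that runs the replay script for that queue, so total wall-clock time is bounded by the slowest queue rather than the sum of all of them.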

We also wanted to maximize the visibility and understandability of this replay test: if any workflow replay within a CircleCI job throws a non-determinism error, the CircleCI test fails, and the developer sees an error message listing all non-deterministic workflow types.
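
A minimal sketch of how a replay job could turn per-instance outcomes into that failure, assuming a hypothetical `InstanceResult` record for each replayed instance. The report format is illustrative, not the project's actual output; the only CircleCI behavior relied on is that a nonzero exit code fails the step.

```typescript
// Outcome of replaying one workflow instance; error is set when replay failed.
interface InstanceResult {
  workflowType: string;
  workflowId: string;
  error?: string;
}

// Builds the job's report: every non-deterministic workflow type is listed
// once (with its first error), and a nonzero exit code makes CircleCI mark the
// job as failed.
function summarizeReplay(results: InstanceResult[]): { exitCode: number; report: string } {
  const failedTypes: string[] = [];
  const firstErrorByType: Record<string, string> = {};
  for (const r of results) {
    if (r.error === undefined || failedTypes.indexOf(r.workflowType) !== -1) continue;
    failedTypes.push(r.workflowType);
    firstErrorByType[r.workflowType] = r.error;
  }
  if (failedTypes.length === 0) {
    return { exitCode: 0, report: `Replayed ${results.length} workflow instance(s) with no non-determinism.` };
  }
  const lines = failedTypes.map((t) => `  - ${t}: ${firstErrorByType[t]}`);
  return {
    exitCode: 1,
    report: [`Non-deterministic workflow types (${failedTypes.length}):`, ...lines].join("\n"),
  };
}
```

In a Node script, the report would be printed and `exitCode` assigned to `process.exitCode`, so the job fails only after every replay in it has run.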

Once we had the CircleCI integration working, we configured it to run on every branch of repositories that include workflow code.

Below is a dependency graph of how our CI/CD pipeline executes. Note how the two test-replay tests run in parallel with each other and with unit and end-to-end tests.

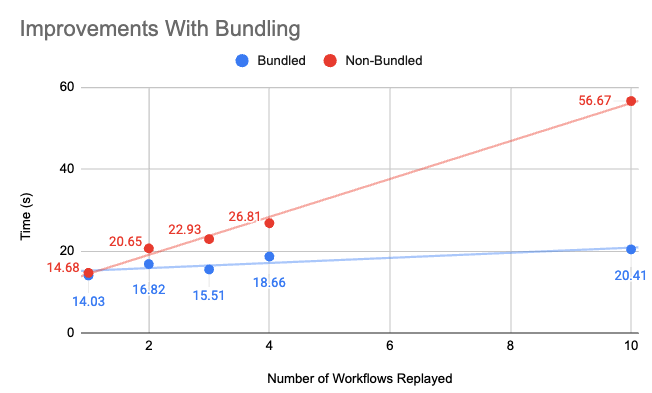

From there, we looked for ways to speed up the process. We noticed that most of the execution time was spent initializing the workers used in the replay; querying for workflow IDs and fetching their histories were relatively quick operations. To reduce this wall-clock time, we used Temporal's ability to pre-build a workflow code bundle before the Worker starts. Passing the bundle to the worker reduces startup time, since the worker no longer has to bundle the code at runtime. Because each replay starts its own worker, this produced a substantial improvement in execution speed.
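
The "bundle once, replay many" pattern can be sketched as follows. As an assumption, in the Temporal TypeScript SDK the bundler would be `bundleWorkflowCode({ workflowsPath })` from `@temporalio/worker`, and each replay worker would receive the result through its `workflowBundle` option; both are injected here so the control flow runs standalone.

```typescript
// Sketch of "bundle once, replay many". Assumption: with the Temporal
// TypeScript SDK, bundleCode would wrap bundleWorkflowCode({ workflowsPath })
// and replay would pass the bundle to each replay worker via workflowBundle.
interface WorkflowBundle {
  code: string;
}
type BundleWorkflowCode = () => Promise<WorkflowBundle>;
type ReplayWithBundle = (bundle: WorkflowBundle, workflowId: string) => Promise<void>;

async function replayAllWithSharedBundle(
  workflowIds: string[],
  bundleCode: BundleWorkflowCode,
  replay: ReplayWithBundle,
): Promise<void> {
  // Pay the slow bundling cost once instead of once per replay worker.
  const bundle = await bundleCode();
  for (const workflowId of workflowIds) {
    await replay(bundle, workflowId);
  }
}
```

Because bundling dominates worker startup, hoisting it out of the loop turns a per-replay cost into a one-time cost per job.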

We also considered being more selective about which workflows are replayed. Currently, when any change is pushed to GitHub, even if no workflow code is edited, the replay tests run for every workflow. This is not strictly necessary; ideally, we could identify which workflows need to be verified based on the code modified in the commits.

However, we felt that with code bundling, the workflow replay tests were fast enough that further optimization was not a priority.
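
The selective approach was not built as part of this project. One way it could work, assuming each workflow type can be mapped to the source path prefixes it depends on (the map below is hypothetical), is to replay only the types whose paths a commit touches:

```typescript
// Hypothetical map from workflow type to the source path prefixes its code
// depends on. Building an accurate map (including shared helpers) is the hard
// part; a missing entry would silently skip a replay that was needed.
type WorkflowSources = Record<string, string[]>;

// Returns the workflow types whose sources are touched by the changed files.
function affectedWorkflowTypes(changedFiles: string[], sources: WorkflowSources): string[] {
  return Object.keys(sources).filter((workflowType) =>
    sources[workflowType].some((prefix) => changedFiles.some((file) => file.startsWith(prefix))),
  );
}
```

Replaying everything remains the safer default, since an under-specified dependency map would let a breaking change through without any replay.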

The reality of working with new open source tools

While we were completing this project, Temporal released version 1.0.0 of its TypeScript SDK. Even though we were working with a tool that was still in development, it had many useful and interesting features. The Temporal engineers were excited about this project and interested in receiving feedback to improve their tooling and documentation. This project would not have been possible without their help and partnership!

Conclusion

With the completion of this project, we can give developers confidence that their changes to workflow code will not break determinism in production. As new engineers onboard onto the workflow tool and learn Temporal's paradigms, and as experienced engineers update workflows to support new scenarios, this tool will greatly increase their velocity, and it has already been a helpful guide.

Building this tooling offers exciting capabilities for Convoy and for other teams using Temporal's open source software. I'm excited to see how this project impacts future work. Shoutout to my mentor Rupali Vohra and manager Brian Holley for their help on this project, and to the other members of the PAW team, the summer interns, and everyone at Convoy who gave feedback and support throughout my time this summer!